Earlier this year I set some goals for myself, one of which was certification-related. For the sake of learning something new and with my VMware VCP-DCV expiring later this year, I want to try and recertify on a different track. It has been a bit of a toss-up between VMware NSX and VMware Horizon, but given that I implemented Horizon at work recently, I will be going that route.

My home lab consisted of three Intel NUCs, which is great; however, I was running into some issues. First off, I kept hitting all-flash vSAN issues. I suspect this is related to the Intel NVMe drives that I’m using. It also doesn’t help that my hardware isn’t on the HCL, but I haven’t dug too deep into it. The second issue was that although three hosts are great, a fourth would be helpful, even if just for management. And although I have a FreeNAS box that I use for shared storage, running it over 1 GbE isn’t ideal. With that in mind, I knew that I needed to solve the storage issues first, followed by a fourth host, if possible.

ioFABRIC FOR THE WIN

One of the many IT-related groups that I belong to is the ioPROs, a group run by ioFABRIC. If you haven’t read my overview of their solution, I suggest giving it a read, as their product (Vicinity) is definitely not your conventional software-defined storage. As luck would have it, ioFABRIC had announced a design contest where ioPROs could submit their own designs, and the best design (as voted upon by fellow ioPROs) would win some shiny new NVMe storage. I took this as an opportunity to figure out how I could solve the problems noted above. Documentation is never fun, but if it can lead to some new NVMe storage, then count me in. I am happy to say that I won the contest, so I wanted to highlight the design that I submitted.

ARCHITECTING THE DESIGN

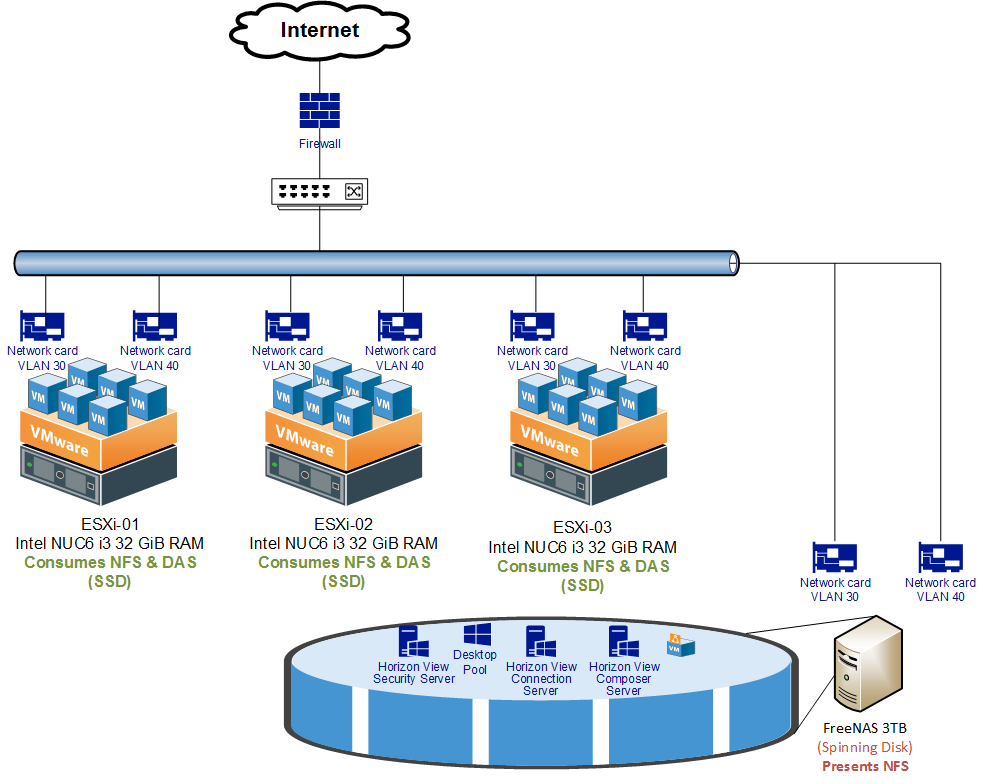

To recap, here is a simplified look at my home lab before the redesign.

- 3 x Intel NUC (with local flash and NVMe)

- 1 x FreeNAS (serving NFS and iSCSI)

- 1 GbE connections throughout

Challenges:

- Poor storage performance

- Poor storage utilization

- No dedicated management node

MANAGEMENT HOST

Homelab before ioFABRIC

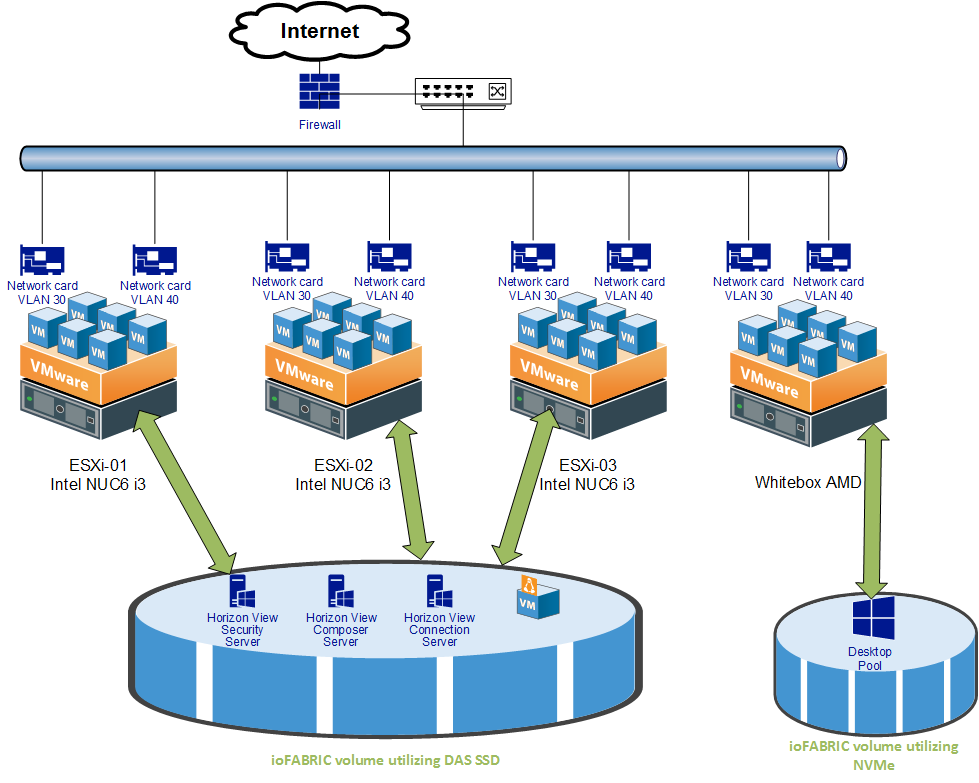

One of my major goals was to not blow the budget, which was essentially to not spend any money :). I have a 2-year-old AMD FX desktop with 6 cores and 16 GB of RAM. It is not scorching fast by any means, but it is decent enough to run something like the VCSA. Luckily, I also had some Amazon.com gift cards in my account, and by using them I was able to purchase another 16 GB of RAM. A 32 GB management host will be sufficient for what I am trying to do. This host will run management functions, such as vCenter and Vicinity’s Manager node. So that takes care of the fourth host.

STORAGE

How about storage? As I mentioned earlier, the NFS setup with FreeNAS works OK, but it just doesn’t have the IOPS that I am looking for. This is largely because it is running off of four spinning disks with no SSD for capacity. Luckily, ioFABRIC seems like a good fit for this problem. Each Intel NUC has local storage in the form of SSD and NVMe. My plan is to deploy an ioFABRIC data node to each host, consume the local disk space, and then build out a volume based off of it. With the built-in orchestration, this should give me the capacity I need as well as improved performance compared to going across the network for NFS.

Homelab after ioFABRIC

Lastly, with the NVMe that I won, I plan on getting a PCIe-to-M.2 adapter card and popping it into that AMD desktop. Once that is in there, I’ll create my VMware Horizon desktop pool on that storage. I figure that with new desktops spinning up, that workload will likely be the heaviest.
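For anyone who likes to see a plan written down as data, here is a minimal Python sketch of the placement idea: put the hungriest workload on the fastest storage and let the slowest storage take the cold data. This is only my own illustration and not anything from Vicinity or its API. The `Tier` and `Workload` classes, the `place` function, the "general lab VMs" workload, and the rank numbers are all made-up assumptions (ordinal guesses, not measurements).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    speed_rank: int  # 1 = fastest; an ordinal guess, not a benchmark


@dataclass(frozen=True)
class Workload:
    name: str
    demand_rank: int  # 1 = most IOPS-hungry; an ordinal guess


def place(workloads, tiers):
    """Pair the most demanding workload with the fastest tier, and so on down."""
    by_speed = sorted(tiers, key=lambda t: t.speed_rank)
    by_demand = sorted(workloads, key=lambda w: w.demand_rank)
    placement = {}
    for i, workload in enumerate(by_demand):
        # If there are more workloads than tiers, the extras share the slowest tier.
        tier = by_speed[min(i, len(by_speed) - 1)]
        placement[workload.name] = tier.name
    return placement


# Hypothetical labels and ranks that mirror the plan described in this post.
TIERS = [
    Tier("FreeNAS over 1 GbE (spinning disks)", 3),
    Tier("NVMe in the management host", 1),
    Tier("local SSD/NVMe on the NUCs", 2),
]
WORKLOADS = [
    Workload("archive / cold data", 3),
    Workload("Horizon desktop pool", 1),
    Workload("general lab VMs", 2),
]

PLACEMENT = place(WORKLOADS, TIERS)
for workload_name, tier_name in PLACEMENT.items():
    print(f"{workload_name} -> {tier_name}")
```

In practice the orchestration in the storage layer is supposed to make these decisions for me; the sketch is just a way to sanity-check that the design lines up with where I expect the load to be.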

RECAP / tl;dr

I went with this approach because:

- I had a halfway-decent desktop that I could add as a fourth host;

- NVMe will be useful for the desktop pool as it would require the most IOPS;

- ioFABRIC will let me use local storage on each host, but also leverage my FreeNAS for a slower / archive tier.

Hopefully I’ll have a follow-up post after I sort everything out.